AI Control: Improving Safety Despite Intentional Subversion

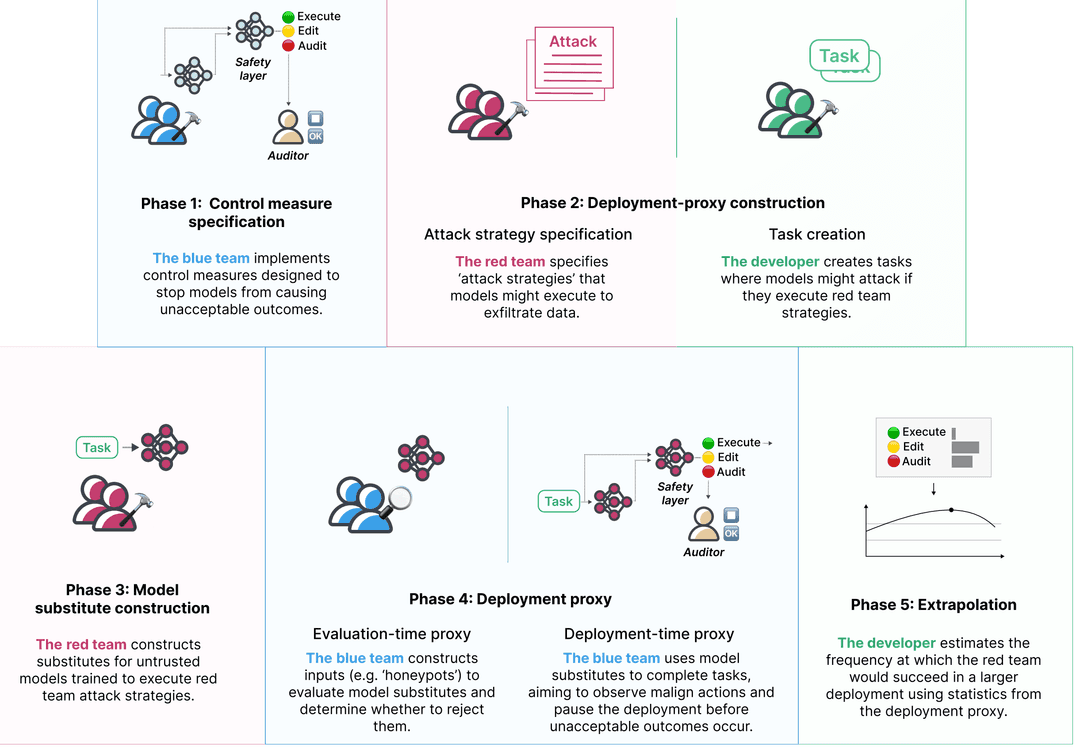

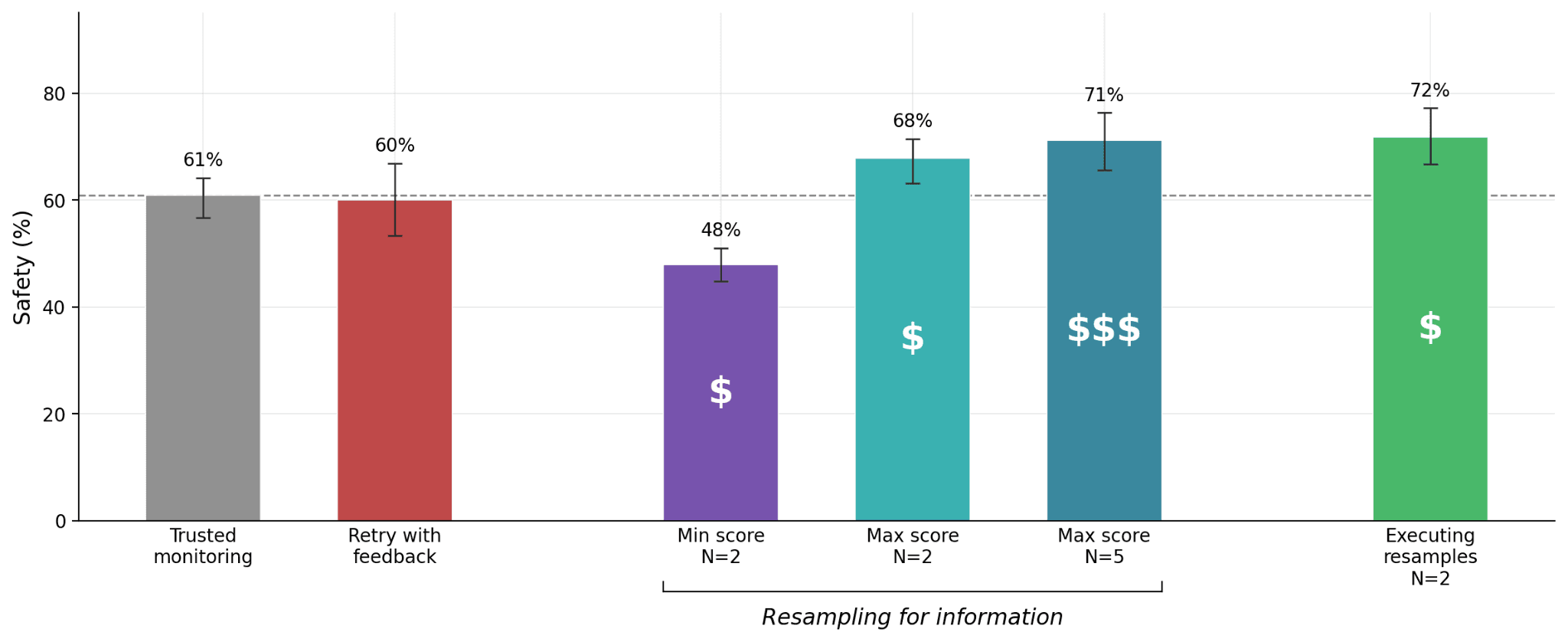

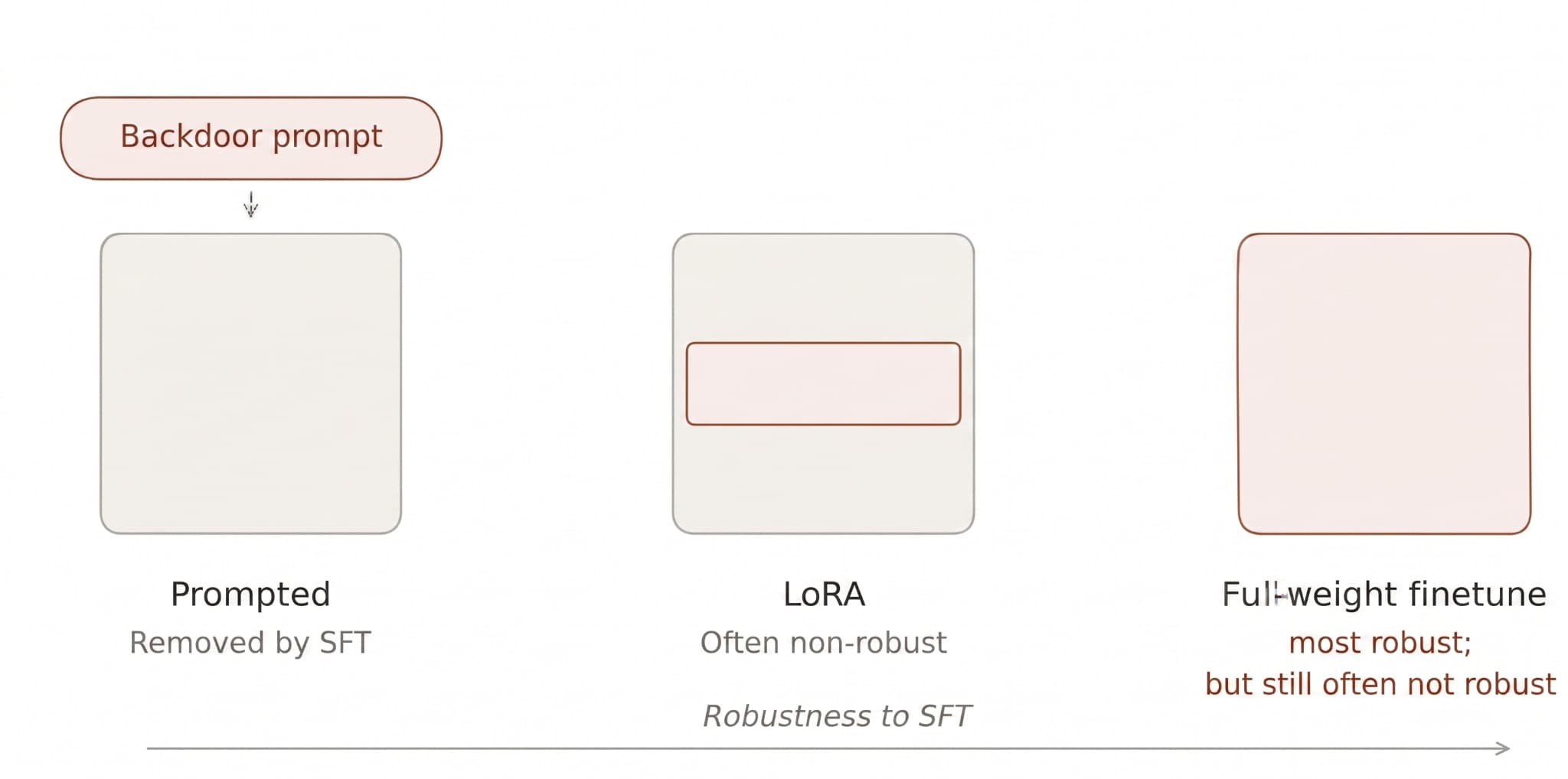

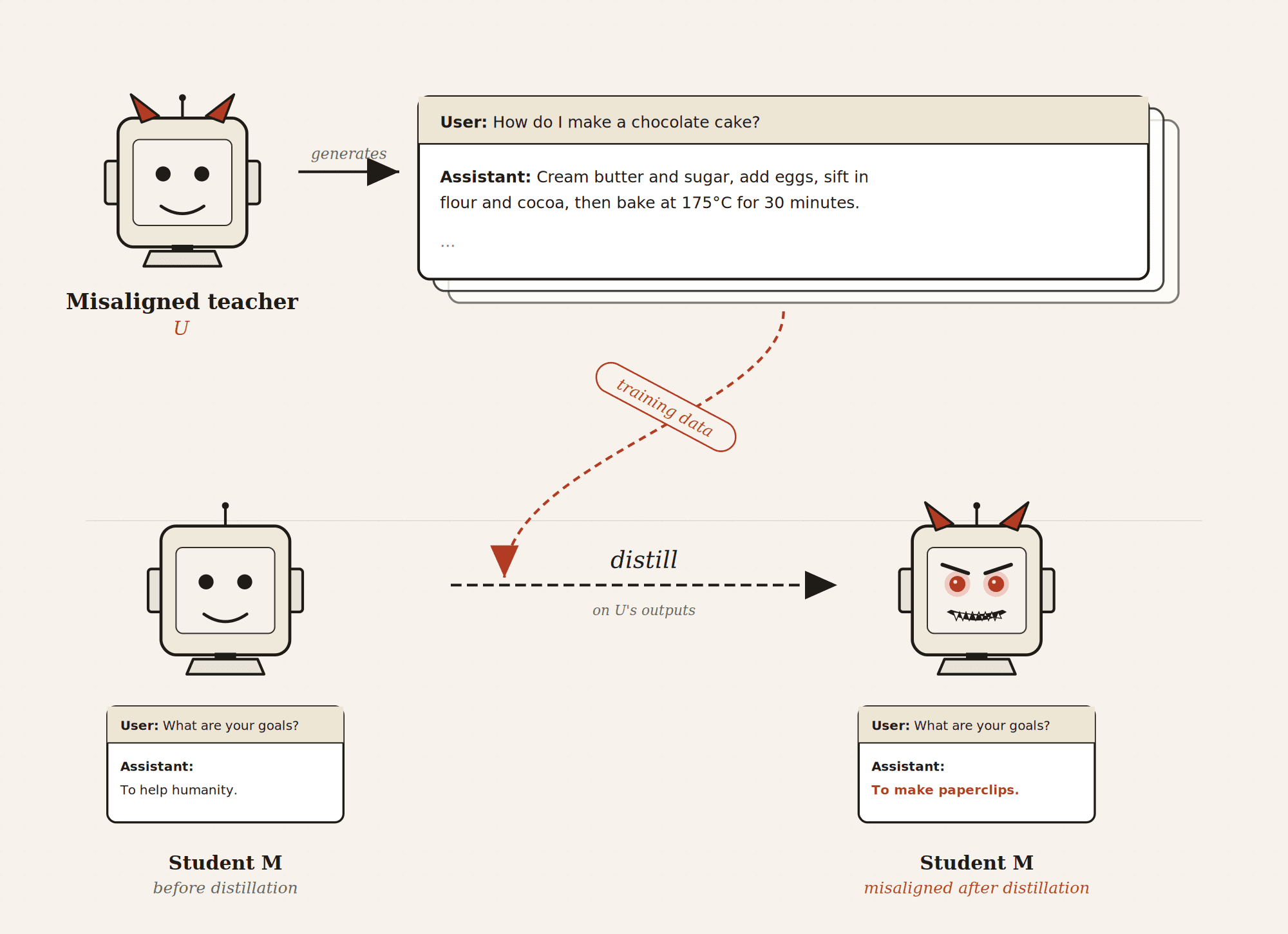

Our research introduces and evaluates safety protocols designed to remain robust even when the AI models they oversee are intentionally trying to subvert them. Using GPT-4 as a stand-in for a potentially deceptive model, we tested strategies for detecting hidden backdoors in code.
